Full control over your AI applications

Governance and FinOps for your AI agents and applications: Keep track of costs at all times with Token Control and manage access to your AI models efficiently and securely.

Seamless integration with leading AI providers

AI costs always in mind

Manage your AI budgets & limits

Manage the AI budgets of your user groups and users centrally. Define budget limits for departments, applications, projects, or individual users. Distribute your AI budgets according to the needs of your company or organization and avoid unplanned costs.
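To illustrate how nested budget limits can work, here is a minimal Python sketch. It assumes that a request is allowed only if its cost fits within the user's own limit and every limit above it (for example, the user's department). The `Budget` class, its fields, and the EUR amounts are hypothetical and do not reflect Token Control's actual data model.

```python
from dataclasses import dataclass
from typing import Iterator, Optional


@dataclass
class Budget:
    """Hypothetical budget node: a department, application, project, or user."""
    name: str
    limit_eur: float
    spent_eur: float = 0.0
    parent: Optional["Budget"] = None  # e.g. the department a user belongs to

    def chain(self) -> Iterator["Budget"]:
        # Yield this budget and every budget above it in the hierarchy.
        node = self
        while node is not None:
            yield node
            node = node.parent

    def can_spend(self, cost_eur: float) -> bool:
        # A request fits only if it fits every limit in the chain.
        return all(b.spent_eur + cost_eur <= b.limit_eur for b in self.chain())

    def charge(self, cost_eur: float) -> None:
        # Book the cost on this budget and all parent budgets, or refuse it.
        if not self.can_spend(cost_eur):
            raise ValueError(f"budget exceeded for {self.name}")
        for b in self.chain():
            b.spent_eur += cost_eur


sales = Budget("Sales department", limit_eur=500.0)
alice = Budget("alice", limit_eur=50.0, parent=sales)
alice.charge(30.0)
print(alice.can_spend(25.0))  # False: 30 + 25 exceeds alice's 50 EUR limit
print(sales.spent_eur)        # 30.0: the department budget is charged as well
```

Charging the whole chain keeps department totals consistent with the sum of their members' spending, so a department limit can cap a group of users even when each individual is still within their own limit.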

Monitor & analyze your AI costs

Monitor your AI costs and the budget status of your users clearly and in real time. Get monthly cost reports for your internal billing processes. Analyze your AI consumption based on your user groups, users, and budgets.
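At its core, a monthly cost report per user group is an aggregation over usage records. The Python sketch below assumes hypothetical record fields and hypothetical prices per 1,000 input and output tokens; real prices depend on the model and provider, and Token Control's own report format may differ.

```python
from collections import defaultdict
from dataclasses import dataclass
from datetime import date

# Hypothetical prices in EUR per 1,000 tokens; real prices depend on model and provider.
PRICE_IN_PER_1K = 0.002
PRICE_OUT_PER_1K = 0.008


@dataclass(frozen=True)
class UsageRecord:
    """One hypothetical usage entry as it might be logged per request."""
    day: date
    user: str
    group: str
    input_tokens: int
    output_tokens: int


def monthly_costs_by_group(records):
    """Sum costs per (month, user group), e.g. as input for internal billing."""
    totals = defaultdict(float)
    for r in records:
        cost = (r.input_tokens * PRICE_IN_PER_1K + r.output_tokens * PRICE_OUT_PER_1K) / 1000
        totals[(r.day.strftime("%Y-%m"), r.group)] += cost
    return dict(totals)


records = [
    UsageRecord(date(2025, 7, 3), "alice", "Sales", 10_000, 2_000),
    UsageRecord(date(2025, 7, 15), "bob", "Sales", 5_000, 1_000),
    UsageRecord(date(2025, 8, 1), "carol", "R&D", 1_000, 0),
]
report = monthly_costs_by_group(records)
for (month, group), eur in sorted(report.items()):
    print(f"{month} {group}: {eur:.4f} EUR")
```

Grouping by user instead of group, or by budget, only changes the key used in `totals`.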

Plug-and-play integration with existing AI solutions

Integrate Token Control into your AI solution with our transparent plug-and-play approach. It's simple and requires no additional development effort. And of course, it also works with white duck PrivateGPT Chat.

AI governance under control

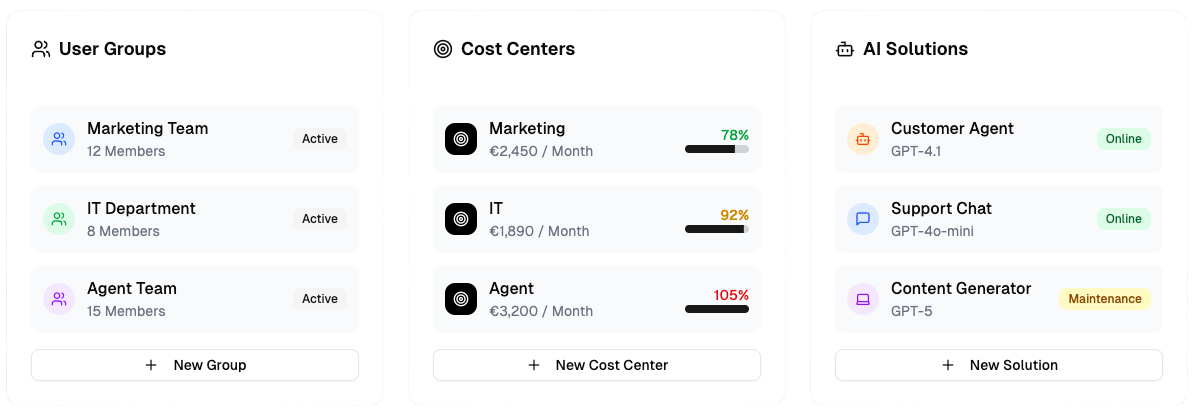

Organization of your business entities

Manage your business entities centrally by clearly structuring user groups, departments, cost centers, or solutions such as AI agents, chats, and applications. Manage access rights and budgets in a targeted manner to ensure transparency, efficiency, and control over your AI models.

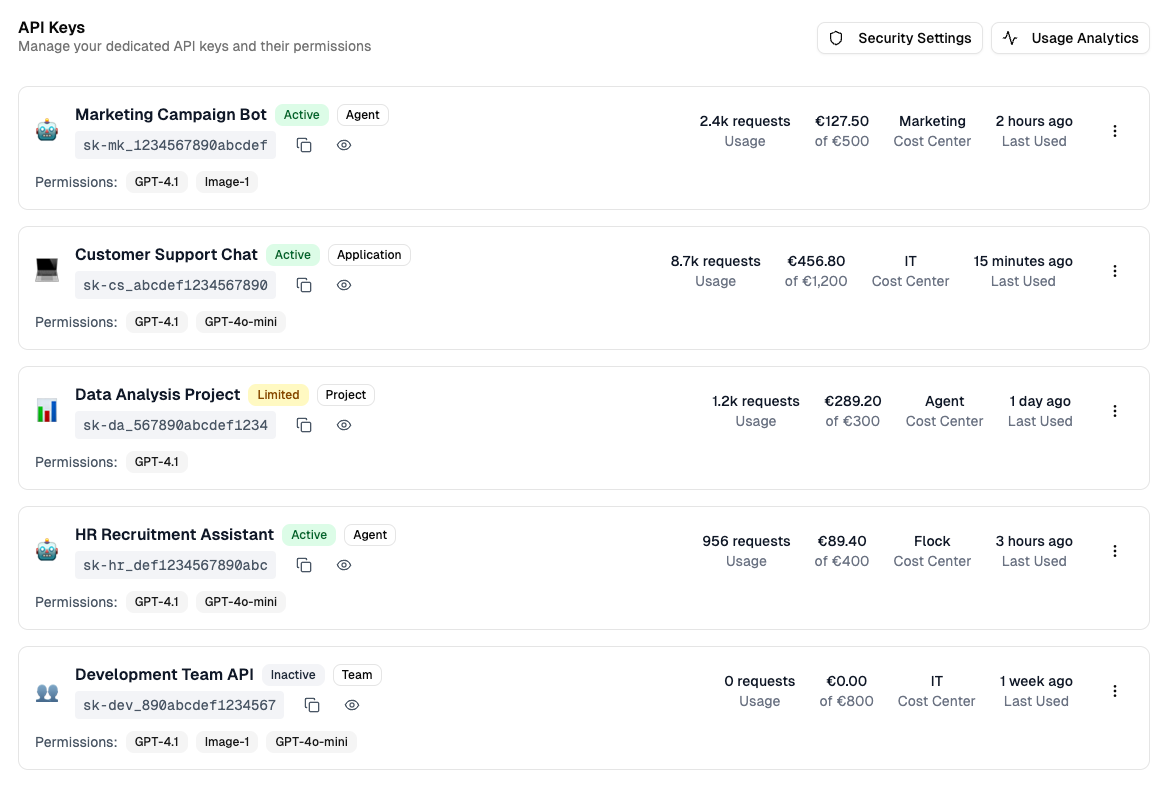

API key management for your AI solutions

Create and manage dedicated API keys for your AI solutions to control access individually and ensure security. Enable targeted use by specific applications, projects, or user groups and keep a clear overview of how your AI model resources are used.

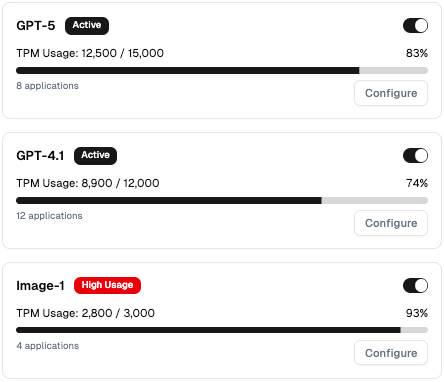

Control access to your LLMs

Monitor and manage access to your LLMs with clearly defined TPM (tokens per minute) limits per application. Set individual usage limits for applications, teams, or projects so that no single consumer exhausts the shared model capacity and your AI models stay responsive for everyone.
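A per-application TPM limit can be pictured as a sliding 60-second window over the tokens an application has consumed. The Python sketch below assumes that the token count of a request is known before it is forwarded (in practice it is often estimated from the prompt and the requested maximum output); the `TpmLimiter` class and the application names are hypothetical and not Token Control's implementation.

```python
import time
from collections import deque


class TpmLimiter:
    """Hypothetical sliding-window tokens-per-minute limit for one application."""

    WINDOW_SECONDS = 60.0

    def __init__(self, tokens_per_minute, clock=time.monotonic):
        self.limit = tokens_per_minute
        self.clock = clock      # injectable so the window can be tested without sleeping
        self.events = deque()   # (timestamp, tokens) of accepted requests, oldest first
        self.used = 0           # tokens consumed inside the current window

    def _evict_expired(self, now):
        # Drop requests that are 60 seconds or older from the window.
        while self.events and now - self.events[0][0] >= self.WINDOW_SECONDS:
            _, tokens = self.events.popleft()
            self.used -= tokens

    def try_consume(self, tokens):
        """Record the request and return True if it fits the limit, else return False."""
        now = self.clock()
        self._evict_expired(now)
        if self.used + tokens > self.limit:
            return False  # a gateway would typically reject this with HTTP 429
        self.events.append((now, tokens))
        self.used += tokens
        return True


# One limiter per application (names and limits are illustrative).
limiters = {"support-chatbot": TpmLimiter(10_000), "report-generator": TpmLimiter(50_000)}

# Deterministic demo with a fake clock.
fake_now = [0.0]
demo = TpmLimiter(1_000, clock=lambda: fake_now[0])
first = demo.try_consume(800)    # True
second = demo.try_consume(300)   # False: 1,100 tokens would exceed 1,000 TPM
fake_now[0] = 61.0
third = demo.try_consume(300)    # True: the 800-token request has left the window
```

A sliding window avoids the burst that a fixed per-minute counter allows at minute boundaries, at the cost of storing one entry per accepted request.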

Start now

SaaS

For a quick and easy start to your AI management.

Fully managed solution

Integrate with your existing AI solution

Pay-as-you-go

Data residency in Germany

Private

For your AI solution with complete data sovereignty.

Deployed in your own Azure environment

Isolated data storage

Tailored to your individual needs

Operated by us

Partners

Bring Token Control to your market as our partner.

Dedicated multi-tenant environment

Your individual branding

Integrated with your solutions

Tailored to your business model

With NOVA, our customized AI assistant, we can use AI securely and in compliance with data protection regulations within our company, thanks to its seamless integration into our corporate environment, intuitive operation, and tailored responses. Working with white duck is always professional and straightforward, meaning short communication channels, quick feedback, and a strong team with diverse AI and cloud-native expertise.

white duck GmbH as our innovation partner!

They advise us and provide concepts as well as a turnkey solution with full cost control for our computer science, business informatics, and artificial intelligence students. This enables us to adapt teaching concepts and also provide students with lightweight programming interfaces. The first projects for industrial partners are already underway.

FAQ

Which AI models are supported?

Token Control supports all common LLMs, including:

Azure AI Foundry:

All common OpenAI models, Microsoft models (Phi, model-router), and models from Mistral AI, Meta, DeepSeek, xAI, and Nvidia, as well as Hugging Face and open-source models.

(Azure) OpenAI:

GPT-5 (Chat, Mini, Nano), GPT-OSS, GPT-4.1, GPT-4o, o3-mini, o1, o1-mini, GPT-Image-1, text-embedding-3

Google Gemini:

Gemini 2.5 (Pro, Flash, Flash-lite)

Mistral AI:

Medium 3.1, Magistral Medium 1.1, Codestral 2508, 3B, Medium 3

Meta Llama:

Llama 4 (Maverick, Scout, Behemoth)

In addition, Token Control supports all open-source LLMs that provide an OpenAI-compatible API.

How is integration into existing AI solutions implemented?

Token Control integrates into your existing AI solutions simply and efficiently, without any additional development effort.

Seamless integration:

All requests to your AI models pass through Token Control, which checks your budgets and limits, records usage, and forwards each request unchanged. This preserves the full functionality of your existing systems.

No code changes required:

AI solutions that already use Azure OpenAI / Azure AI Foundry or other supported models can be operated directly with Token Control without any changes to the code. This saves valuable time and resources and enables quick and easy implementation.
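In practice, "no code changes" usually means a configuration change only. The Python sketch below assumes that Token Control exposes an Azure OpenAI-compatible endpoint and accepts a dedicated API key in the standard `api-key` header; the host name `tokencontrol.example.com`, the key value, and the deployment name `gpt-4o` are placeholders. It builds, but does not send, a standard Azure OpenAI chat completions request whose target comes entirely from the `AZURE_OPENAI_ENDPOINT` and `AZURE_OPENAI_API_KEY` environment variables (the same variables the official `openai` Python SDK reads for its `AzureOpenAI` client).

```python
import json
import os
import urllib.request


def build_chat_request(deployment, messages, api_version="2024-06-01"):
    """Build (but do not send) an Azure OpenAI chat completions request.

    The target comes only from environment variables, so pointing the
    application at a proxy such as Token Control is a configuration change.
    """
    endpoint = os.environ["AZURE_OPENAI_ENDPOINT"].rstrip("/")
    url = (f"{endpoint}/openai/deployments/{deployment}/chat/completions"
           f"?api-version={api_version}")
    body = json.dumps({"messages": messages}).encode("utf-8")
    return urllib.request.Request(
        url,
        data=body,
        method="POST",
        headers={"Content-Type": "application/json",
                 "api-key": os.environ["AZURE_OPENAI_API_KEY"]},
    )


# Placeholder values: the host name and key below are hypothetical.
os.environ["AZURE_OPENAI_ENDPOINT"] = "https://tokencontrol.example.com/"
os.environ["AZURE_OPENAI_API_KEY"] = "dedicated-key-for-this-app"

req = build_chat_request("gpt-4o", [{"role": "user", "content": "Hello"}])
print(req.full_url)
# https://tokencontrol.example.com/openai/deployments/gpt-4o/chat/completions?api-version=2024-06-01
```

Under these assumptions, sending `req` with `urllib.request.urlopen` would route the call through Token Control instead of directly to Azure OpenAI, while the request path and body stay exactly the same.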

Flexible model management and scaling:

Token Control supports the scaling of AI models and the management of multiple model deployments. This allows you to run different models in parallel and respond flexibly to the requirements of your applications, projects, or teams.

Can I integrate Token Control into third-party solutions?

Token Control can be easily integrated into third-party solutions. Thanks to its plug-and-play architecture, it works with existing AI chats, chatbots, and tools such as GitHub Copilot Chat, without complex adjustments or additional development work.

Integration is achieved through the use of dedicated API keys, which ensure a secure and controlled connection between Token Control and your third-party solutions. These API keys allow you to precisely control access to your AI models and efficiently monitor usage. This gives you full control over your AI resources at all times and enables you to implement governance and cost policies in a targeted manner.

Are web search and deep search supported?

Token Control offers comprehensive support for grounding and deep search through seamless integration with leading AI agent frameworks such as FLOCK (our open-source AI agent framework), LangGraph, LangChain, Semantic Kernel, and AutoGen. These frameworks enable you to connect your AI models to external data sources, delivering more accurate and contextually relevant results.

Support for web search is a key part of our roadmap and has the highest priority. If you have specific requirements or use cases, please contact us to discuss your needs and work together to develop the optimal solution.

Are RAG (Retrieval-Augmented Generation) and grounding supported?

Token Control fully and directly supports RAG (Retrieval-Augmented Generation) and grounding “out of the box.” This allows you to seamlessly connect your AI models to external knowledge sources or databases to generate more accurate and context-aware responses. Thanks to its simple and efficient implementation, you can use these technologies without additional development effort and noticeably improve the quality of your AI solutions' responses.

Is administration possible via Microsoft Entra ID groups?

Token Control supports management via Microsoft Entra ID groups (formerly Azure AD). You can use M365 and security groups to map API keys and business entities such as user groups, departments, or cost centers. This enables centralized and structured control of access to your AI resources based on existing organizational structures.

Is integration with an API gateway possible?

Token Control can be easily integrated with an API gateway (e.g., Azure API Management). While Token Control solves organizational challenges such as the management and reporting of API keys, user groups, departments, or cost centers, the API gateway handles technical aspects such as routing and load balancing. Together, they offer a comprehensive solution that addresses both organizational and technical hurdles.

Does Token Control offer an MCP server?

An MCP server for detailed analyses and comprehensive reporting is currently being planned and is a central component of our roadmap. With this MCP server, we want to give you expanded, AI-assisted options for overseeing, evaluating, and optimizing your AI usage. This feature is not yet available, but we are working hard to implement it. If you have specific requirements or requests, please contact us; we look forward to incorporating your needs into the development process.

Is Token Control GDPR compliant?

Token Control is fully GDPR compliant and was developed with a clear focus on data protection and data security. All data is processed and hosted exclusively within the EU, using the proven infrastructure of Microsoft Azure. By using Azure's EU Data Boundary, we ensure that all data flows and storage comply with the strict requirements of the European General Data Protection Regulation (GDPR).